[Editor’s note: IMG MGMT is a semi-annual, image-based essay series by artists.]

preamble:

this essay consists of a series of files taken from computer backups, which it then uses as a starting point for riffing on concerns about “medium” as they relate to the production of artwork with computers.

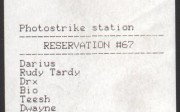

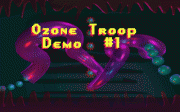

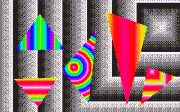

c:\G\ (1996)

c:\Desktop\images (1998)

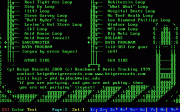

c:\Windows\Temporary Internet Files (1999)

c:\desktop\pbd\beige\random (2001)

f:\Documents and Settings\My Documents\IMAGE BANK\dog plates (2004)

c:\dos\graphics (2005)

like most everyone who reads this, i hold a huge number of image files stored on cds, hard drives, dvds, zip disks, floppies, usb keys and the like… and i don’t even consider myself particularly concerned with images. i searched through tens of thousands of them for this essay, all from backups i made of previous computers’ hard drives whenever i got a new computer. i then selected some directories and simply displayed each file in each directory as it was backed up, with slight adjustments: i removed duplicates and files that wouldn’t upload correctly.

the c:\Desktop\images (1998) directory seems to be almost entirely “random” internet images that must have struck me when i saw them. in contrast, every image file in the f:\Documents and Settings\My Documents\IMAGE BANK\dog plates (2004) directory is specifically related through subject and color, and it was obviously my intention to keep it contained in this way. the c:\G\ (1996) directory, the oldest one in this essay, contains the results of interacting with a particular graphics program when i was in high school. “ozone troop” was my high school techno band, and “joeboy”, a character in William Gibson’s Neuromancer, was my dj name at 16. created 9 years later, the c:\dos\graphics (2005) directory contains the backed-up contents of the c:\G\ (1996) directory plus the results of returning to the same software when i made work for a show in 2005.

i didn’t want to include anything too recent, like the contents of the \phd\ folder on my desktop that’s been staring at me for the past few years, as part of the pleasure in going through old backups is to look again. looking again at the c:\desktop\pbd\beige\random (2001) directory is a saccharine dose of pure nostalgia for me, for a time before i’d thought to consider how career and commodification might affect a collaborative practice.

you can see there is a distinct autobiographical element to these images, and perhaps a voyeuristic element for those viewers so inclined. however, those who wish to explore a more critical context might start with my decision to expand the post beyond my original idea of displaying just the c:\Windows\Temporary Internet Files (1999) directory. these are images that were stored without my intention, by a system of which i was mostly unaware, and only discovered on a backup cd years later.

in the context of images on art blogs or tumblrs, this initial idea would’ve sat nicely. it all looks a bit messy-formal, and one could ponder what these images depict and hence what i might have been doing to get them stored in the cache (searching for images of soccer players on rosh hashanah perhaps??). and since i didn’t intentionally store any of them, yet found myself retrieving and appropriating them years later, we’d get to play a game i like to call “fun with agency!”. most importantly, it has that certain knowing distance of a work created by some separate system or agenda (in this case a Microsoft technology running silently under my nose/fingers) that is reclaimed by the artist and displayed in a way intended by neither Microsoft, Intel, nor any of the other various authors of the original images.

this all sounds defensible enough, contextually relevant and certainly easy to digest, and, the more i thought about it, trite. to support an artwork of online file aggregation simply because it uses appropriated content is analogous to saying “i love this sculpture because it exists in three dimensions.” it’s only doing something that the thing in question already does by definition. sculptures are 3d, and (i’m not really going out on a limb here) large portions of the http protocol are designed to display and link content located at and derived from other sources. so to me, posting up a bunch of images “reclaimed” from the man… errr, i mean discovered in a temporary cache… started to look like lots of other artist-led image aggregation that, as art, i don’t rate. dear readers, i really wanted to do something better for you.

so, what to do? i still had a photo essay to sort out. i chose to expand the essay to include not only unintentionally stored and backed-up files, but also files that i stored intentionally and backed up for a variety of reasons: some thematic, some psycho-sexual (the blue dog plates folder), but most for reasons i can’t really explain. this, i think, is helpful, because now we have a more interesting question to start from: an artist has made something entirely with a computer that isn’t a one-liner. how do we evaluate it?

here we are also presented with the twin threads of the photo essay: the hugely varying intentions behind the content and collection of each image file, and the single storage technology and mechanism of the backup. they are obvious threads in the sense that they would exist for every possible IMG MGMT: BACKUPZZ post i might have made – that mess of meaning, possibly non-computable, and the computable but increasingly intricate machine system that contains its symbols. but i don’t have much to say about the first thread, image files that i can’t even explain why i posted. it’s probably not the greatest semantic thread in an artwork, although as an artist i’m lucky that not being able to explain parts of my work has little to do with its status as art. and as we all know, a “viewer bringing their own meaning to a work” is a logical tautology: it’s true in all possible worlds, and hence in all possible artworks. so in this case i’d have to say it’d be a pretty boring topic to write about, although i do enjoy looking at the images, as i hope you do too.

what about the second thread? the guts, the means, the system, the material, the backup, the thing that it actually is made from. you know, “the medium”? this is what i’d like to consider.

although the concept of a medium is often stigmatized by its association with our long-departed notion of “medium-specificity”, in a general sense it has historically been useful for developing various evaluative criteria. a major difficulty for evaluation, then, arises when computers confront our concept of a medium. i’m sure, dear readers, that you intuitively understand how this happens, as it has been an ongoing part of the “new media” discourse for decades. but let me briefly illustrate it with a thought experiment:

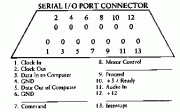

hypothetical artist A, let’s call her Paddy, sits down at a computer and generates a set of data (a file), as almost all software does. maybe Paddy programmed in Java, maybe she used Microsoft Paint; it doesn’t matter. Paddy then translates this set of data so it controls the colors of static pixels on the computer screen: it generates a picture. Paddy doesn’t really like it on the screen, so she prints it out. she doesn’t like the printout either, and she gets rid of it. Paddy then translates the same set of data so it controls the values of a digital-to-analog audio converter: it generates sound. she doesn’t like the sound, and she gets rid of that. finally Paddy translates the data so that it controls a sequence of pixels on the computer screen: it generates a “moving image”. Paddy likes that, and she shows it in a gallery. probably a non-profit.
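to make the thought experiment a bit more concrete, here’s a minimal python sketch that assumes nothing about Paddy’s actual tools: one buffer of bytes is read three ways, as grey pixels in a picture, as 8-bit audio samples, and as a run of frames. the function names (make_data, as_picture, as_sound, as_moving_image) are hypothetical, and PGM and WAV are chosen only because the python standard library can write them without extra packages.

```python
import io
import random
import wave


def make_data(n: int, seed: int = 0) -> bytes:
    """Hypothetical stand-in for Paddy's file: n arbitrary bytes."""
    rng = random.Random(seed)
    return bytes(rng.randrange(256) for _ in range(n))


def as_picture(data: bytes, width: int) -> bytes:
    """Read each byte as a grey level and wrap the result in a binary PGM (P5) image."""
    height = len(data) // width
    header = f"P5\n{width} {height}\n255\n".encode("ascii")
    return header + data[: width * height]


def as_sound(data: bytes, rate: int = 8000) -> bytes:
    """Read each byte as an unsigned 8-bit sample and wrap it in a mono WAV file."""
    buf = io.BytesIO()
    with wave.open(buf, "wb") as w:
        w.setnchannels(1)
        w.setsampwidth(1)  # 1 byte per sample: 8-bit WAV samples are unsigned
        w.setframerate(rate)
        w.writeframes(data)
    return buf.getvalue()


def as_moving_image(data: bytes, width: int, height: int) -> list[bytes]:
    """Cut the same bytes into consecutive frames of width*height grey pixels."""
    size = width * height
    return [data[i:i + size] for i in range(0, len(data) - size + 1, size)]


# usage: the same data, three translations
data = make_data(64 * 64 * 10)
picture = as_picture(data, 64)
sound = as_sound(data)
frames = as_moving_image(data, 64, 64)
```

nothing in the bytes themselves decides which of the three Paddy ends up exhibiting; only the last translation step differs.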

now – what medium is Paddy working in? if someone said “data”, would they be wrong? what about “moving image”, “code”, “computer”, “cinema”, or even “print” or “sound”, based on her earlier attempts at a fixed piece? let’s suppose she liked the print and exhibited that: would her medium really be much different from when she exhibited the moving image, considering that 99% of her work was done before she hit that final button that said PRINT instead of PLAY?

more to the point – why is the medium in Paddy’s process so vague to us (or is it just me)? one would think it’d be just the opposite, as computers are so open to inspection. we can know everything about the functioning of Paddy’s work when she makes it on a computer, so shouldn’t we be able to at least identify its medium?

a simple way to see the disconnect is in how we discuss visual art displayed on/by a computer. you don’t often hear someone expressing appreciation for an “image” when viewing a painting; they usually appreciate the “painting”. yet how often do you hear appreciation for the “jpeg” when the thing people are looking at is a jpeg? not so much. we usually say “image”, which reflects how computer-based art is detached from the specific supports of the work when we discuss it.

a more systemic sign of the disconnect is in our literature, which almost exclusively defines the computer as a type of hybrid media device or some sort of multimedia machine. in a typical case (and i need to apologize here for dropping an obscure reference; i only came across it because i’m doing my doctorate at the same institution, and i think it’s a representative example), 2002 Whitney Biennial participant/game artist/professor Mary Flanagan devoted all of one sentence of her quite good PhD dissertation on feminist/activist computer gaming to the nature of computers. her single sentence described the computer as a “hybrid media” that contains many other, presumably more static, media. while we can easily debate the validity of Ms. Flanagan’s definition (which other media, exactly, does the computer contain and hybridize?), what’s more important is how it represents the state of current discourse, academic or otherwise, which mostly ignores questions about what computers “are” and instead focuses intently on what they can “do”. hence, while there has been a surge in the number of critical and theoretical texts about computers in the arts, especially since the “new media” dawn of the early 90s, few if any have been true medium-centric investigations of how the computer functions in art practice.

so let’s think about it 🙂

i’m not sure anyone cares about McLuhan’s “medium is the message”; it seems to come back every few years and we might be in a downturn, but consider it for just a second: messages contain information (Shannon), and information has meaning (Floridi). mediums are messages (McLuhan), therefore mediums have meaning. that’s easy enough. but now take a computer: its definition requires that it can be perfectly simulated, and it is limited only in that what it can contain must be computable (Turing). (the range of things we know to be computable constantly grows; this year, for the first time, it includes Jeopardy champions.) so how would we begin to define the message, and hence the meaning, of this more or less universal medium?

obviously McLuhan’s ideas have plenty of problems. i can remember LOLing when i read about “hot” and “cold” mediums years ago [the real Paddy asked why the LOL: i remember thinking that it contradicts McLuhan’s claim that content doesn’t matter when he’s rating mediums based on how well we understand a given medium’s transmitted content]. but McLuhan’s ideas are nevertheless helpful as a model for how we’ve thought about mediums, especially considering that some artists have explicitly claimed the computer as their medium for over 40 years (A. Michael Noll named the “digital computer” as his creative medium in 1967; i’m sure there are earlier examples).

however, we have two basic problems. one: as our thought experiment shows, no one is pinning down the computer in terms of medium. two: medium-specificity, as an evaluative criterion, was destroyed by post-modernism. familiar examples of medium-specificity are Greenberg’s flatness, Kuleshov’s development of Soviet montage theory and the “Materialgerechtigkeit” (“truth to materials”) of mid-20th-century architecture. but as we know, pomo demonstrated that ticking boxes A, B and C in your piece to show you’ve conformed to some accepted definition of how your materials should work really didn’t have a whole lot to do with how good your piece was.

well, why not get rid of mediums then? that’s exactly what happened next. by negating a definition of medium almost a decade ago, Rosalind Krauss put us in what she famously called the “post-medium condition”. what does combining Ms. Krauss’s idea with our McLuhan-based model from a few paragraphs back suggest? our messages no longer hold meanings? we no longer have messages? it’s an interesting contradiction, especially because in making my photo essay i found my backupzz to be bound to what i understood as the medium i was working with. a thing called a backup is what ties these images together, and i think my experience of using a computer and feeling like i’m working with a distinct medium, and not just a simulation of another one, is extremely common.

most of the “post-medium” type arguments rely on equating a medium with a material, suggest that digital technologies have stripped materiality from artwork, and conclude that any relationship to the concept of medium must therefore now be some weird anachronism. this is what theorist Mary Ann Doane does in her version of Krauss’s post-medium: “With the advent of digital media, photography, in particular, has seemingly lost its credibility as a trace of the real, and it could be argued that the media in general face a certain crisis of legitimization.” and specifically regarding photography: “…the digital offers an ease of manipulation and distance from any referential grounding that seems to threaten the immediacy and certainty of referentiality we have come to associate with photography…”. McLuhan would certainly have agreed with that, but i’m not sure he would have concluded that digital photography has no medium; he’d likely have suggested it’s just a rather different one.

in the end, what the “post-medium” argument puts forth is simply this: because of emulation, digital artifacts aren’t real. Krauss argues for the term “technical support” instead of medium for just this reason. yet while they easily notice emulation’s effects on cultural forms, no one in the post-medium room seems interested in discovering where emulation comes from or why it exists as a fundamental property of the Turing-machines (computers) that run our art. perhaps this is unfortunate: a reality defined by assumptions about what computers can’t yet do is a strange one. and instead of refuting the existence of any contemporary medium (in the sense that if a digital photograph isn’t a photograph, it has no ties to any sort of material), they might also consider a perspective where Turing-machines, as the vehicles of emulation, are themselves the medium through which artists are now working, perhaps without even knowing it.

so what does post-medium give in exchange? why, the hilarity of Nicolas Bourriaud explaining the breakdown of mediums by writing about how artists are now really like djs, cutting and scratching their way through cultural artifacts to arrive at a historically informed practice of globalized sign manipulation! you know, like this. or maybe like this. so while it’s actually quite easy to agree with many of the tenets of the post-medium argument, and it is a pure pleasure to read someone like Rosalind Krauss, Bourriaud demonstrates that post-medium conclusions often sound just as silly as before. [Paddy asked me to discuss this more: it’s not really fair to interrogate an analogy, but as it’s all we have scope for, consider how his notion of a DJ is romanticised. at the time Bourriaud wrote Postproduction, the best DJs were universally acknowledged to be the DMC champions, i.e. the ones with the most technical, material skills, and not “semionauts”. i would also point to DJ Spooky That Subliminal Kid as the literal embodiment of Bourriaud’s analogy of a sign-spinner. Spooky is a truly abysmal DJ, and while i’m sure he’s still off “remixing culture” somewhere right now as Bourriaud would have him do, does anyone really care? the old criticisms still stand: that “artists using signs” is nothing new (Bourriaud complains about this), and that it is hypocritical to claim that someone like Tiravanija challenges “authorship” and “origin” in his work when he’s the only one who gets paid.]

i actually don’t mean to be so terrible to Bourriaud; for me his analysis of individual artists worked up to a point, and there’s no way he could have foreseen how the internet, the “central tool” of his remixed-up framework, would morph into a giant, continuous pay-per-click. his proposition that “Do-It-Yourself will reach every layer of cultural production” now seems strangely idealistic as our very idea of self becomes controlled by Google. so while on one hand Bourriaud was completely correct and ahead of the curve (for a curator) when in 2001 he described artists as working with “data”, on the other hand you might have thought it an opportunity for a medium, and a related art theory, to be grounded in the connections to process, information and logical truth that data as a form provides. when so many artworks are about systems, networks, interactivity or otherwise, you’d think that in order to truly place value on a system you’d want to understand it.

at this point i’m going to give a quick shout-out to everyone’s favorite common-sense philosopher, Aristotle. in his Poetics, written 2,300 years ago, Aristotle invented art criticism and based much of it on a concept that we translate into English as “medium” (Butcher, 1902 translation). not only that, Aristotle was criticizing things like the rather immaterial art form of lyric poetry, that is to say an artwork consisting of a program of discrete instructions (lyrics and notes) stored in a digital format (an alphabet and a musical scale system) and executed (played) in real time, so that it does not exist as an object (immaterial). over two millennia later, that sounds strangely familiar, doesn’t it? we can see, then, that for a long, long time material vs. medium either wasn’t an issue or was an issue that we were happy to ignore. computers didn’t bother the de-materialized art soup of the late 60s and 70s: there weren’t loads of computer art exhibitions, but there also weren’t loads of computers, and the few exhibitions that did happen are now seen as seminal (Cybernetic Serendipity at the ICA, London in 1968 being an archetype). it’s only years later, when computers go mainstream, that they become a real problem regarding materiality and a “post-medium” view emerges.

of course, the post-medium stuff hasn’t been the only attempt to theorize computer/medium questions. in the 90s, resurgent post-structuralist theory provided a lot of wishful thinking about what the internet was going to be (Paddy wants a reference here, so i suggest reading any critical text written between 1990 and 1994 that has the word “Hypertext” in the title). the early 2000s brought a “new media” theory that slathered the vocabulary and analysis of other media, usually film and cinema, onto computers, i.e. onto something that ontologically is not film or cinema (Bolter: “remediation”; Manovich: everything). and all this against the backdrop of “computer art”, whose origin predates both video art and conceptual art, but which has since been ghettoized into the various centres and festivals we’re familiar with, often deservedly so.

so here we are now, 2011, and the very idea of bringing up medium in relation to computer-based art makes me feel old. perhaps it is my own stupidity for being interested in medium. i’d like to think it more likely that the easy solution was to largely gloss over ‘medium’, as a concern, in the production of contemporary art. no one is to blame for this given our current conditions: computers have a nebulous relationship to material, stifling mediocrity defines so much “new media” artwork of the past 20 years, and a general fearfulness of lapsing back into the old ways of “medium-specificity” pervades. but consider the effects of writing off an understanding of an artwork’s medium (its processes, what it is) as a component of evaluation: we simply can’t call bullshit like we used to. if i exhibit some computer-based work and it actually doesn’t do what i claim, no one notices. likewise, if i say something stupid about computers in an art context, who’s really going to call me on it? (quick example of the latter, and this book is full of them: in The Language of New Media, Lev Manovich writes that computers “rely uniformly on lossy compression” (pg. 54) when using digital images. don’t tell that to anyone who’s ever made an animated GIF!)
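for anyone who wants the GIF point spelled out: GIF stores its pixel data with LZW compression, which is lossless, so decoding gives back exactly the bytes that went in. the sketch below is textbook LZW over bytes, emitting plain integer codes. it is a simplification, not the GIF file format: a real GIF encoder adds variable-width codes, clear and end-of-information codes, and bit packing. (reducing a photo to GIF’s 256-color palette can throw information away, but that happens before compression, not in it.) the function names are hypothetical.

```python
def lzw_encode(data: bytes) -> list[int]:
    """Textbook LZW: emit one integer code per longest already-seen byte string."""
    table = {bytes([i]): i for i in range(256)}
    current = b""
    codes = []
    for b in data:
        candidate = current + bytes([b])
        if candidate in table:
            current = candidate  # keep extending a string we already know
        else:
            codes.append(table[current])
            table[candidate] = len(table)  # learn the new string
            current = bytes([b])
    if current:
        codes.append(table[current])
    return codes


def lzw_decode(codes: list[int]) -> bytes:
    """Rebuild the same table on the fly and expand every code back into bytes."""
    if not codes:
        return b""
    table = {i: bytes([i]) for i in range(256)}
    previous = table[codes[0]]
    out = [previous]
    for code in codes[1:]:
        if code in table:
            entry = table[code]
        elif code == len(table):
            # special case: the code names the entry that is being built right now
            entry = previous + previous[:1]
        else:
            raise ValueError(f"invalid LZW code {code}")
        out.append(entry)
        table[len(table)] = previous + entry[:1]
        previous = entry
    return b"".join(out)


# usage: a stripy run of "pixels" shrinks to fewer codes and comes back unchanged
pixels = bytes([0, 0, 0, 255, 255, 255] * 50)
codes = lzw_encode(pixels)
restored = lzw_decode(codes)
```

the whole argument rests on that last step: `restored` is identical to `pixels`, byte for byte.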

what strikes me as bizarre, however, is how, in the face of theory and practice that declare the concept irrelevant, we still use our old word “medium” ALL THE TIME. the term has become such a high-frequency, low-content word that we don’t even notice it any more, since its meaning has disintegrated. do we really only use the word historically, then, as just another 404 link to an art context of the past that should be binned? i want to say no for two reasons: one, the concept is still formative for artists; two, regardless of how the term “medium” has been used (Noël Carroll even suggests that the status of “film” as a specific fine art medium was driven not only by the celluloid material, but by artists wanting jobs in subsequently needed film departments), understanding an artwork’s means of representation, its medium, is a valid component of its evaluation. that is not going to change, ever, whether computers are involved or not.

one scene in which artists have been trying to crack the computer/medium question is the glitch/compression artifact/general fucking-digital-things-up community. it makes intuitive sense that to investigate a medium, you might rip it apart; it’s worked that way before, anyway. i like a lot of this stuff, but a glitch is nothing more than any other computable action. we see the outcome of certain actions as glitch only because of the way various software or hardware constructs have become normalized in our lives. in any event, an artist using glitch as a way to explore some sort of “aesthetic of the medium” is not going to find anything. mediums don’t have aesthetics; people do. what glitch does do is remind us that meaning exists in a continual state of potential simulation. i happen to think this is important, and that once in a while we need the mirror shattered. glitch accomplishes this feat, despite its other limitations.
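a minimal sketch of the claim that a glitch is just another computable action, assuming a simple “databending” approach of flipping random bits in a file’s bytes (the glitch function and its parameters are hypothetical, not any particular artist’s tool). given the same seed it produces the same “error” every time, and running it a second time with that seed undoes it exactly, since flipping a bit twice restores it.

```python
import random


def glitch(data: bytes, flips: int, seed: int) -> bytes:
    """Flip `flips` pseudo-randomly chosen bits; the same seed always picks the same bits."""
    if not data:
        return bytes(data)
    rng = random.Random(seed)
    out = bytearray(data)
    for _ in range(flips):
        i = rng.randrange(len(out))  # which byte to damage
        out[i] ^= 1 << rng.randrange(8)  # which bit in that byte to flip
    return bytes(out)


# usage: the "error" is repeatable, and reversible with the same seed
original = bytes(range(256)) * 4
damaged = glitch(original, 5, 42)
repaired = glitch(damaged, 5, 42)
```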

artists also explore the computer/medium question through interactivity; Dominic McIver Lopes’s recent book “A Philosophy of Computer Art” is even based on a definition that “computer art” must be interactive. what is this interactivity, then, that drives the work and arguably gives it meaning? Claire Bishop offers a textbook definition:

“Many artists and critics have argued that this need to move around and through the work in order to experience it activates the viewer, in contrast to art that simply requires optical contemplation (which is considered to be passive and detached). This activation is, moreover, regarded as emancipatory, since it is analogous to the viewer’s engagement in the world. A transitive relationship comes to be implied between ‘activated spectatorship’ and active engagement in the social-political arena.” (Bishop, Installation Art)

but take two of Rafael Lozano-Hemmer’s Relational Architecture works: one produced in Yamaguchi, Japan, in which SMS messages are converted to control signals for spotlights, and one in Toronto, Canada, which senses the heart rates of passersby to do the same thing. what is interactive in any meaningful way here? imagine being in Yamaguchi, sending an SMS, and seeing the spotlights move. now imagine trying to relate those movements to your SMS among hundreds. difficult to do. now imagine the work with no one participating at all: the spotlights still look just as awesome. now imagine being in Toronto, wandering by a sensor and seeing some other spotlights move. could you tell the difference between a heart-rate spotlight and an SMS spotlight? it’s hard to see what’s interactive about a system that runs with or without you, or where any composed input is aesthetically indistinguishable from simple randomness. what happens in reality is that the ‘activation’ Lozano-Hemmer’s work is designed to provide is meaningless.

there is no interaction with the system that a viewer can have that’s unique to that viewer, and the importance of a piece based on interactivity seems to fade away when you can’t distinguish your own actions from noise. i think the real question to ask is: given that this interactivity is meaningless, would these works have been funded without it? this demonstrates a limitation that we can intuitively detect in “interactive” work but that is difficult to make sense of: just because the thingamabob moves when you step in front of the webcam doesn’t mean the piece is interactive. when the system is a one-liner, bothering to trigger it is “analogous to the viewer’s engagement in the world” (Bishop) only insofar as any engagement with our Western social-political arena is already pretty darn empty. but i don’t think that’s what Lozano-Hemmer means.

so to summarize what’s been laid out so far:

– medium is a well-worn concept and we use the word in imaginative ways, but computers going mainstream still freaked people out because one single computer can emulate multiple media

– new media is wishy-washy and easy, and relies on critical frameworks that were developed for other media to discuss computers

– post-medium is interesting, but doesn’t allow for the possibility that Turing-complete machines (computers) could themselves be a medium

– as they either refute the idea of medium or refuse to explicitly define the computer as one, both fail to address the unique ontological issues that arise when using a computer to produce art

– artists are giving it a crack, with glitch and interactivity being two ways of looking at computers, although both have limitations

– in general we’re losing a way to talk about computer-based work (even popular artfagcity subjects like dump.fm and gifs, which aren’t explicitly “about” their computer-based nature)

– i went to art school

taking all that on board, i’d suggest that some kind of “Turing-informed” framework might be a fruitful option: specifically, a perspective from which we apply knowledge of an artwork’s computational processes to its reading as an artwork. we do some of this naturally anyway; two examples i can think of are the XYZ art thread from a few years back and, last year on this blog, the mashups discussion. i don’t think a Turing-informed perspective is the only one to use, and i also don’t mean something “Turing-informed” in the ars electronica/ITP/eyebeam-funded “i’ve made a crowd-sourced and/or arduino-controlled piece of pop culture ephemera with no endgame” sense. rather, i mean that by foregrounding the nature and functions of these machines and systems, and showing how we can make them intrinsic to our interpretations of work, we create a very powerful tool. with computers as a medium being something artists have pursued for 4 decades, this also isn’t a new idea. an even better example might be Noah Wardrip-Fruin, co-editor of the New Media Reader (a book i would have killed for when i was an undergrad), who wrote in 2006 that “we are only beginning to consider how we might interpret such [computational] processes themselves, rather than simply their outputs”. five years later, an age in internet time, it still rings true.

Paul B. Davis is an art lecturer at Goldsmiths College, and PhD candidate at Central St. Martins. Paul pioneered hacking techniques and video games as fine art and formed the programming collective BEIGE in 2000. He writes his own operating systems and video codecs as art, and works in video, performance and sculpture with every piece having a computer in it somewhere. Paul is represented by SEVENTEEN Gallery in London and his thesis is titled “Turing-completeness as Medium: Art, Computers and Intentionality”, as you probably could have guessed.

{ 6 comments }

Oh my god I love you. It’s all true. Let’s be friends.

I love it. Why do I get 404 instead of full size images ?

Favorite thing on your site evar.

When i read your article it takes me back to every saturday I go home and walk to synagogue with my dad, we meet old women on the way and Florence asks me what kind of art I am making, you are still painting, right? I have never painted, but to tell her that I do installation would go right over her head, not because Florence wouldn’t understand it but because she never heard of it.

What words do you use to talk about computer art? you have to be creative. We should start talking about how many clicks it took to make a microsoft paint picture, and make up fancy new words too.

HooHoo, have you seen this Dutch video series that shows artists (installation, performance, etc.) trying to explain their work to their parents? http://vimeo.com/13508636 I think that might apply to what you’re talking about.

Very interesting essay, also, btw. Looking forward to reading more. I could read a whole book of this.

tl;dr
